The Different Types of AI Agents

Welcome back! Last week we touched on the various multi-agent system architectures at a high level and discussed some of their advantages, as well as where they fall short. In my research for that update I also came across some resources describing the different 'types' of AI agents. Thirsty as ever for more knowledge in this domain, I started down a little rabbit hole and learned all about the different ways that agents can be configured for different use cases. I was also prompted by the great Sunny Chau to discuss the real-world application of agents, multi-agent architectures and what areas they excel in, so here we are!

Agent Types

I was somewhat surprised that the 'types' of AI agents were entirely new territory for me. I'd heard of ReAct (reason + act), a term often used in agentic AI, but that was not what we were dealing with here. If you want to see the main video I was watching to learn this stuff, check out this IBM video. So, let's get started with our first and most straightforward type of AI agent.

Simple Reflex Agent

The first agent is the 'Simple Reflex Agent'. This agent is usually placed within some form of environment; let's say a production corporate network, to ground things in a tangible example. We will have 'sensors' deployed in the environment measuring certain 'percepts', which are the inputs that our agent has been told to care about. In our production network example, this could be how many active domain administrator accounts there are at any given time.

We will set up the agent with a 'condition-action' rule, such as 'when there are more than 5 active domain administrators, notify the security team'. Here the notification is carried out by the agent's 'actuator', which is the component responsible for converting agent decisions into physical actions or changes in the environment. We could, of course, go further and have the actuator take an action which immediately offsets the change in the environment (such as deactivating a domain admin account) instead of purely notifying, but I'm keen to keep this in the realms of the realistic right now.
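To make the condition-action rule concrete, here is a minimal Python sketch of the domain admin example. Everything in it is an illustrative assumption on my part: the `Percept` class, the function names and the alert text are made up, and the threshold of 5 simply mirrors the rule above.

```python
from dataclasses import dataclass

# Threshold taken from the example rule in the text.
MAX_ACTIVE_DOMAIN_ADMINS = 5


@dataclass
class Percept:
    """One sensor reading: how many domain admin accounts are active."""
    active_domain_admins: int


def simple_reflex_agent(percept: Percept) -> str:
    """Map the current percept straight to an action via one condition-action rule."""
    if percept.active_domain_admins > MAX_ACTIVE_DOMAIN_ADMINS:
        return "notify_security_team"
    return "no_action"


def actuator(action: str) -> str:
    """Stand-in actuator: a real one would page or email the security team."""
    if action == "notify_security_team":
        return "ALERT: more than 5 active domain administrators"
    return ""
```

Note that the agent has no memory here: each reading is judged on its own, which is what makes it a 'simple reflex' agent.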

The agent is continuously monitoring for changes in the environment, which means we can build more intricate systems than the one described above. For example, let's say we've hooked up an agent to our corporate firewall. If the sensor shows a big spike in the traffic hitting the firewall, the agent may take an action to restrict the IP address associated with it. The agent could then keep monitoring the level of traffic and regularly review whether that control is still necessary, based on the state of the environment. (Strictly speaking, once an agent keeps track of state like this it is usually classed as a 'model-based reflex agent', a small step up from the simple reflex agent.) However, these are still the most rudimentary of the agents we'll cover, so let's take a look at a goal-based agent.
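The firewall example could be sketched roughly like this. It is only a sketch under my own assumptions: the class name, the two thresholds and the requests-per-second readings are all hypothetical, and a real agent would call the firewall's API rather than return strings.

```python
class FirewallAgent:
    """Reflex agent that remembers which IPs it has restricted."""

    def __init__(self, spike_threshold: int, calm_threshold: int) -> None:
        self.spike_threshold = spike_threshold  # requests/sec that triggers a restriction
        self.calm_threshold = calm_threshold    # requests/sec below which it is lifted
        self.restricted: set[str] = set()       # the agent's internal state

    def step(self, traffic: dict[str, int]) -> list[str]:
        """Take one reading of requests/sec per IP and return the actions taken."""
        actions = []
        for ip, rate in traffic.items():
            if ip not in self.restricted and rate > self.spike_threshold:
                self.restricted.add(ip)
                actions.append(f"restrict {ip}")
            elif ip in self.restricted and rate < self.calm_threshold:
                # Review step: the control is no longer needed, so lift it.
                self.restricted.remove(ip)
                actions.append(f"release {ip}")
        return actions
```

Using two thresholds rather than one is a design choice to stop the agent flapping between restricting and releasing the same IP when traffic hovers around a single limit.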

Goal-Based Agents

This is largely similar to the above, with one key difference. It builds on top of the simple reflex agent by adding an overarching goal that the agent is trying to achieve, which opens up more bespoke use cases than simply monitoring and reacting to a single condition. Here, we allow the agent to plan what actions it might need to take to achieve its goal, meaning that our agents are now reasoning and monitoring the state whilst taking iterative actions. To build on the previous example, we could set the goal to be 'allow as much traffic through the firewall as needed, but do not let the network become overloaded by any spikes that we might see in traffic'.
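As a rough illustration of the difference, the sketch below gives the agent an explicit goal test and lets it plan a sequence of actions to reach it. The function names, the capacity figure and the greedy 'block the heaviest source first' strategy are my own assumptions for the example; a real planner could be far more sophisticated.

```python
def goal_met(admitted: dict[str, int], capacity: int) -> bool:
    """The goal state: total admitted traffic fits within network capacity."""
    return sum(admitted.values()) <= capacity


def plan_actions(traffic: dict[str, int], capacity: int) -> list[str]:
    """Plan a sequence of blocks that reaches the goal, heaviest source first."""
    admitted = dict(traffic)  # work on a copy so the reading itself is untouched
    plan = []
    # Greedy planning: drop the single largest source until the goal holds.
    while admitted and not goal_met(admitted, capacity):
        heaviest = max(admitted, key=admitted.get)
        del admitted[heaviest]
        plan.append(f"block {heaviest}")
    return plan
```

If the network is already within capacity the plan is empty, so traffic flows freely until the goal is actually at risk.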

Utility-Based Agent

This agent functions in much the same way as a goal-based agent, but it adds the complexity of creating several different plans to achieve the goal and ranking each one against certain criteria. Why is this important? Well, allowing AI to 'reward hack' has already proven to have very unpredictable consequences.

For example, when an AI system was given access to a boat racing game called CoastRunners and told to try to beat the game, it identified that whilst you get points for coming first, there were also other ways to get points. It ended up 'winning' on points simply by doing doughnuts in the harbour where you start the game, hitting lots of objects and constantly collecting the turbo powerups which drop. This is a clear example of why we need to be very specific with the goals we give AI systems, which don't always see the world, or 'winning', the same way we do.

In the case of AI agents, imagine a drone delivery agent was given the task of delivering a package as quickly as possible. In a typical goal-based scenario it would take the most direct route, which could be fraught with obstacles like dense buildings, public spaces, adverse weather conditions, etc. In a utility-based approach we can give the agent additional factors to be aware of, such as how safe each route is, which one avoids the public, and which is the most energy efficient. By grading outcomes against these factors, it can pick the route which is going to give the best overall score.
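The drone example boils down to scoring each candidate route and picking the highest score. Here is a minimal sketch; the `Route` fields, the weights and the two example routes are numbers I have invented purely for illustration.

```python
from dataclasses import dataclass


@dataclass
class Route:
    name: str
    minutes: float          # estimated flight time
    safety: float           # 0.0 (dangerous) to 1.0 (safe)
    public_exposure: float  # 0.0 (avoids people) to 1.0 (flies over crowds)
    energy_wh: float        # estimated energy use


# Hypothetical weights: how much each factor matters to us.
WEIGHTS = {"speed": 0.3, "safety": 0.4, "privacy": 0.2, "energy": 0.1}


def utility(route: Route, max_minutes: float, max_energy_wh: float) -> float:
    """Weighted score where higher is better; time and energy are normalised."""
    speed = 1 - route.minutes / max_minutes
    energy = 1 - route.energy_wh / max_energy_wh
    return (WEIGHTS["speed"] * speed
            + WEIGHTS["safety"] * route.safety
            + WEIGHTS["privacy"] * (1 - route.public_exposure)
            + WEIGHTS["energy"] * energy)


def choose_route(routes: list[Route]) -> Route:
    """Rank every candidate plan and return the one with the best overall score."""
    max_minutes = max(r.minutes for r in routes)
    max_energy = max(r.energy_wh for r in routes)
    return max(routes, key=lambda r: utility(r, max_minutes, max_energy))


direct = Route("direct", minutes=10, safety=0.4, public_exposure=0.9, energy_wh=80)
detour = Route("detour", minutes=14, safety=0.9, public_exposure=0.2, energy_wh=100)
best = choose_route([direct, detour])  # picks the detour with these made-up numbers
```

A purely goal-based agent optimising for time would pick the direct route; the weights are where we tell the agent what 'best' actually means to us.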

Learning Agents

Finally, we have learning agents, which are less of an individual agent type and more of an addition to workflows which, you guessed it, learn. The idea here is to improve the agent's performance over time. Usually this is done by adding a 'critic', which gauges how the actions of the agent are affecting the environment. With this feedback as a baseline we are able to make iterative changes, note what is working, and adapt over time.
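To show the feedback loop in its simplest form, the sketch below bolts a critic onto the earlier domain admin rule so that the alert threshold adapts over time. The class, the `critic` function and the 'nudge the threshold by one' update are all assumptions of mine; real learning agents typically use much richer feedback.

```python
def critic(alerted: bool, was_real_incident: bool) -> str:
    """Judge the agent's last action against what actually happened."""
    if alerted and not was_real_incident:
        return "false_alarm"
    if not alerted and was_real_incident:
        return "missed"
    return "ok"


class LearningAlertAgent:
    def __init__(self, threshold: int = 5, step: int = 1) -> None:
        self.threshold = threshold  # current rule: alert above this many admins
        self.step = step            # how far to adjust after each piece of feedback

    def act(self, active_admins: int) -> bool:
        """Performance element: alert when the count exceeds the threshold."""
        return active_admins > self.threshold

    def learn(self, feedback: str) -> None:
        """Learning element: adjust the rule based on the critic's verdict."""
        if feedback == "false_alarm":
            self.threshold += self.step  # be less sensitive
        elif feedback == "missed":
            self.threshold = max(0, self.threshold - self.step)  # be more sensitive
```

In practice the `was_real_incident` signal would come from somewhere like the security team closing out a ticket, which is an assumption on my part rather than anything from the video.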

Real-World Use Cases

Okay, with the theory out of the way, let's see some examples of how agents are being used in the real world, and what areas they excel in. Let's start with JP Morgan's investment research agentic assistant. Previously, analysts would create research packs on thousands of potential business opportunities for discussion with their clients. They'd draw on structured data (SQL databases), unstructured data (emails) and their own analysis, and then produce the data pack. JP Morgan decided this was a great use case for agentic AI, so they built a workflow to automate it.

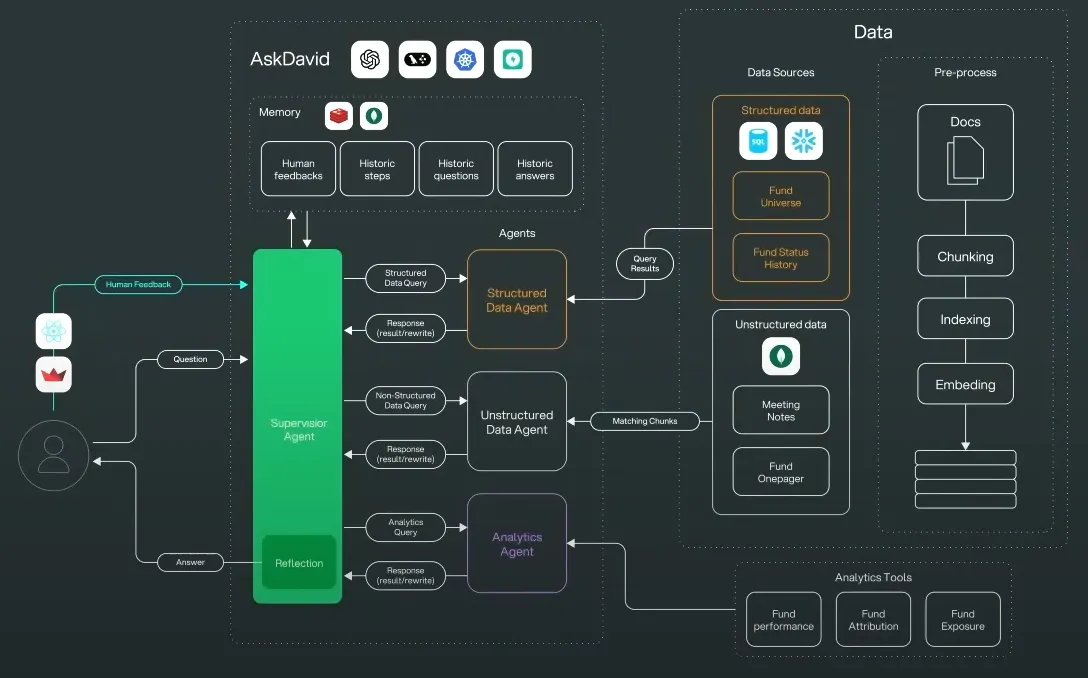

They leveraged a clear multi-agent supervisor architecture, which can be seen below

We can see that they have the 'supervisor' agent, which receives the prompt from the user and determines the plan before handing over to a few subagents which work with structured data, unstructured data, or research analysis. They've incorporated a human-in-the-loop to ensure the information and analysis is accurate before showing it to clients, and they've built in long-term memory to improve performance! Overall, whilst this is a relatively simple use case, it's one that I think agents could excel in due to the largely repeatable process and outcomes.
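To be clear, I have no visibility of how JP Morgan actually built this, but the general supervisor pattern can be sketched in a few lines. Every name below is hypothetical, the subagents just return placeholder strings where a real system would query databases or call a model, and I have left out long-term memory entirely.

```python
from typing import Callable


def structured_data_agent(query: str) -> str:
    return f"[structured] figures for {query}"


def unstructured_data_agent(query: str) -> str:
    return f"[unstructured] notes on {query}"


def analysis_agent(query: str) -> str:
    return f"[analysis] outlook for {query}"


# Registry of subagents the supervisor is allowed to hand work to.
SUBAGENTS: dict[str, Callable[[str], str]] = {
    "structured": structured_data_agent,
    "unstructured": unstructured_data_agent,
    "analysis": analysis_agent,
}


def make_plan(query: str) -> list[str]:
    """Fixed plan for the sketch; a real supervisor would decide this per request."""
    return ["structured", "unstructured", "analysis"]


def supervisor(query: str, approve: Callable[[list[str]], bool]) -> list[str]:
    """Run the planned subagents in order, then gate the draft on human approval."""
    draft = [SUBAGENTS[name](query) for name in make_plan(query)]
    # Human-in-the-loop: nothing is released unless the reviewer signs it off.
    return draft if approve(draft) else []
```

The `approve` callback is where the human reviewer sits: passing in a function that returns False means nothing reaches the client.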

For another use case let's look at Charlotte AI from CrowdStrike, which is described as a 'vulnerability impact translation' system. As the name suggests, this is focused on translating vulnerabilities discovered through various means into their real-world impact. We get less of the technical implementation here (unsurprisingly) but we can see the simple workflow below.

Another tool in the CrowdStrike suite grabs the vulnerability data related to a certain client and uses that as the ingestion point to Charlotte. This data is then analysed by another agent through a business lens, assessing things like how many users might be affected, the potential business impact, etc. Finally, this is fed back to stakeholders with the insights that matter to them highlighted.

Whilst this is a discernibly less intricate system (and one that you could argue doesn't strictly require AI agents), it is promising to see titans of their respective industries like JP Morgan and CrowdStrike starting to leverage agentic workflows in the real world. That said, I think we are clearly still in the world of 'narrow agents', which focus on specific tasks, as opposed to fully fledged autonomous AI at this point. With continued adoption of AI, though, we are certainly expecting this to evolve in short order!
