Data Private AI Deployment Options

AI and data privacy are generally at loggerheads. For many, this is a concern which has hitherto put them off using AI, but some conversations recently have made me realise that still others do not understand that by using AI they are sending their data, sometimes very sensitive data, off to third parties where it may be locked away into training data forever. Today, I thought I’d explain what happens to your data when using AI and the 3 main options most people have to consider. These range from the least ‘private’ to completely data sovereign deployment options, and I hope to leave readers with a clearer view about which option might be best suited for their use case.

So, before we get started about the various ‘non-standard’ options lets start by walking through what happens when most of us use AI day-to-day. Here, I am not talking about building with AI or using it at enterprise scale, but just using ChatGPT for daily queries. This will give a baseline of what the least data ‘private’ option looks like in reality, and then we can talk through various ways we can more AI more ‘private’.

It’s worth clarifying at this point how I am using the term data ‘private’ here. Generally, I am comparing factors like how many third parties get access to your data, what type of third parties, how censored your data is when they receive it, if they store your data, if you have control over your data, etc. Each of these factors is treated differently in the various deployment options we will discuss today, and combined these give us our various potential options that are suitable.

Let’s start with just using ChatGPT for day-to-day tasks or a simple product which uses ChatGPT under the hood, which we will call ‘vanilla’ AI deployments. This is a term which is often used in tech for describing something which is being used in its standard, unaltered configuration. No special changes, requests, or agreements in place.

Vanilla AI Deployments

To allow us to compare these options I’ll cover each deployment method against the same criteria, including a high-level summary and then answers to each of the factors that I consider important here.

Summary: in vanilla AI deployments we are generally interacting directly with the AI provider, or doing so through a product which basically acts as a wrapper. We send our queries to their models and get our responses and typically surrender control of that data. Whatever we put in can no longer be considered ‘private’ any meaningful sense.

Who has access to your data? The AI providers (OpenAI, Anthropic, etc.) and any third parties, such as product companies, that you are consuming. For example, you either buy or build a helpdesk AI software which streamlines repetitive tasks. In this scenario a general assumption should be that the helpdesk product company (if you buy) and the AI provider they/you are using in the product has access to your data. Depending on their policies, they may also share certain metadata with partners for monitoring, abuse detection, or improving services. In effect, at least one external third party always has access.

Is your data stored by third-parties? By default yes. If you don’t change any settings, most AI providers log your prompts and responses by default, typically keeping them for at least 30 days for abuse and security monitoring, but often longer if they’re also used for research or model training. On free or consumer accounts, your data is usually fair game for improving models which is a problem, as once your data is used to train a model, it becomes “absorbed” into the training data the model has learned. You can’t realistically extract or delete a specific prompt afterwards.

Do you have control over your data? Minimal to none - as mentioned there are some settings that you can opt out of and slightly improve data privacy and related concerns, but ultimately surrendering the data is a price you pay for using AI. If you are talking about enterprise plans there may be more and stricter settings that you can change, such as not using your data to train models, which is a small win.

Managed AI Platforms (Azure OpenAI & Amazon Bedrock)

Summary: managed platforms are a sort of middle ground. The way they work is that the managed platforms, usually either Microsoft or Amazon, host their own versions of the frontier AI models (GPT-5, Claude 4, etc.) and give you access to them, usually with a lot more data privacy options like never sending your data to the AI providers themselves. So, why is this any different to vanilla or sending your data to the AI providers directly? Well, the advantage here is that most companies already trust either Amazon or Microsoft with their data. Think, for example, of how many companies use MS Teams, SharePoint and OneDrive - in the cloud era most companies have risk accepted that Microsoft and / or Amazon will house their sensitive data, and this option allows them to get the latest and greatest AI without having to risk accept sending their data off to another third party, which most orgs do not do lightly.

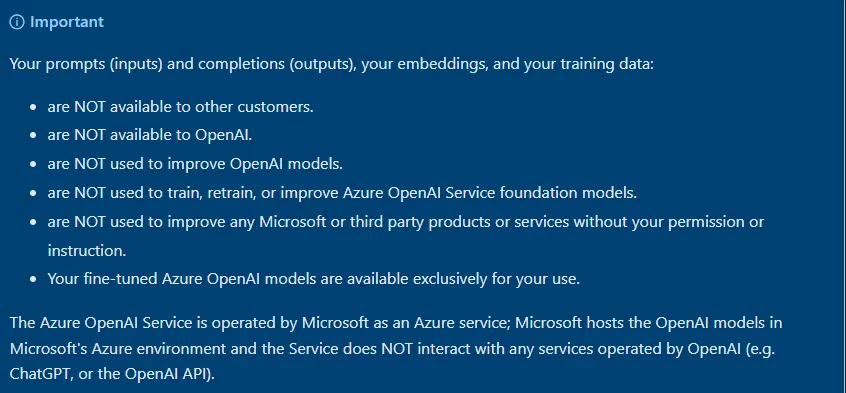

Who has access to your data? In most cases, only the managed platform provider like Microsoft or Amazon have access to your data. This is actually something which they say loud and clear in several places, as it is a great marketing tool. See this call out box on the Azure OpenAI front page

A similar declaration can be seen in Amazon’s ReInvent 2024 talk:

Is your data stored by third-parties? By the managed platform providers, yes, but by additional third parties, no. As mentioned, this is something which has often already been risk accepted, and there are far more data privacy controls available to you here, including limiting the storage of data as much as possible.

Do you have control over your data? not complete control, but much more. For example, you can specify that you want Microsoft to only use models and storage in the same country that you are in, meaning cross-country data sharing isn’t taking place. You can specify where your data is processed, if it is used in training, how long it is retained, and in some cases enforce “no logging”. You still don’t control the underlying model or the platform itself, but you get contractual guarantees and compliance guardrails.

Open-Source Local Models

Summary: finally, we have the most ‘data private’ option - local open-source models. This is where you never send your data off to AI providers or managed platforms, but bring the AI to you. You can do this by using different models which are open-source, meaning that anyone can download, setup and run the model themselves for free. They can even be run without an internet connection or in an air-gapped network, so there is no possible way of the data leaving your environment. However, there is a trade off. Open source models tend to lag several years behind frontier models in their performance, and require you to have the technical know how to setup, maintain and patch these models. Furthermore, you’ll often need to run these models on very large machines which comes with its own cost, even if the open-source models themselves are free.

Who has access to your data? Just you. No third party has access to your data.

Is your data stored by third-parties? Nope!

Do you have control over your data? Total control - you dictate the terms of data handling in its entirety.

Conclusion

I hope that this was inciteful and not touching you all how to suck eggs. It still amazes me though how many orgs using AI don’t understand these options, or what is happening to their data. Personally, we at Secure Agentics have always and will always build AI using managed platforms, with strict agreements in place regarding data privacy. I’d urge many of you either building or buying AI to consider doing the same, or going down the local model route if you can pull it off.

Be first to secure your agents