ChatGPT Health Concerns

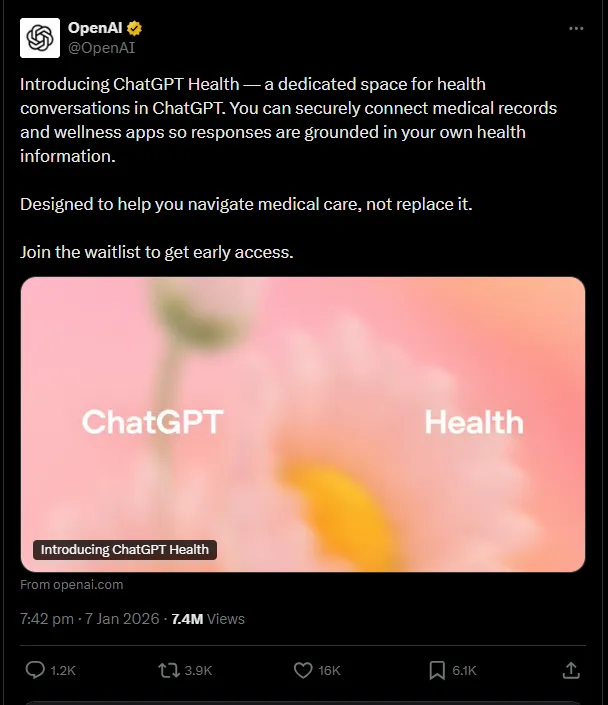

A few days ago a friend of mine put this on my radar

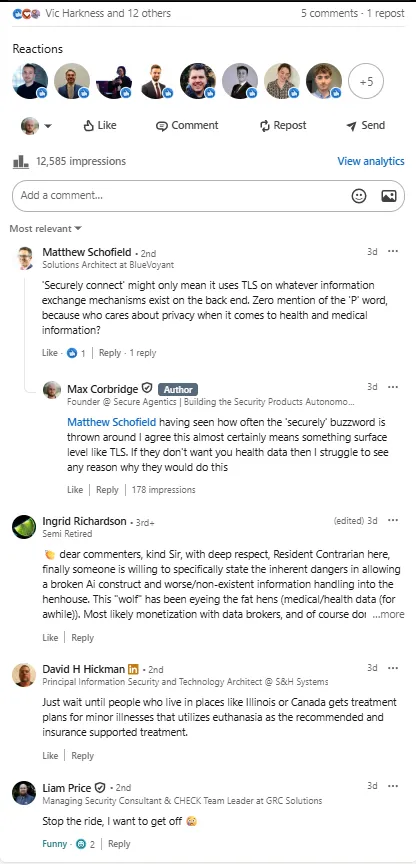

I immediately shared this on my LinkedIn where the sentiment that this was something that could be pretty dangerous was echoed by other security practitioners:

I knew when I saw this I was going to write a dedicated blog post about it, but having since done more research into what this is offering I wanted to provide, perhaps in vein, a more balanced view point on it. As such, I will actually begin by talking through the positive side of this, before inevitably shooting that all down in the second half of the blog.

Why this isn't a terrible idea

I needed to do a bit more research on this before I tore it apart, so I tried to sign up for the service. Sadly it isn't available in my region yet, and even when magically appearing to come from the US the service was available but only to join a waitlist, so I'll have to take my info from this page.

Now, the first thing I want to mention is that for better or worse, people are already using ChatGPT for health queries. According to OpenAI it is actually 'one of the most common ways people use ChatGPT, with hundreds of millions of people asking health and wellness questions each week'. From my own usage of AI and how I know others use AI, this doesn't entirely surprise me. I recently shared that in 2025 I ended up using ChatGPT once-per-productive-hour-of-the-day for every single day of the year. That is a lot of ChatGPT'ing (no, thats not a verb yet) and whilst I can't recall many specific examples I'm sure that there were a few health or wellness related queries in that mix.

What I am getting at here is that if people are already using AI for their health queries (which they shouldn't.but they are) then why not better serve them with a product which has more specific domain knowledge, useful features and was actually built with over 260 physicians across 60 countries?

Next up is that healthcare, especially tailored advice for you specifically, is not always easy to come by. Here in the UK healthcare is free, but it can take some time to get appointments. There are many countries around the world where healthcare is not free, and many cannot afford it. However, they may be able to afford internet and, assuming this isn't paywalled upon release, that may be enough to get access to some decent healthcare advice for them specifically, and instantly. It is probably no surprise that this is launching first in the US.

Another 'good' point is that, for better or worse, this is almost certainly the direction AI and society is headed as it is a compelling use case for AI. One of the promised lands of AI are those futuristic humanoid robots doing chores around the house and looking after the elderly. Sidenote, whilst its a bit of a sham of a company there is some movement (I wouldn't yet call it progress) on this front. AI's ability to become an expert in a particular domain, and then have infinite patience, capacity and availability to serve users has always marked it as a huge opportunity for healthcare, and really this is just the natural evolution for LLMs.

Why this is a terrible idea

Now that is out the way and with me feeling slightly better about not being such an AI doomsdayer, let's explore why many of us in the security community are concerned about this.

First, historically we have been very careful to avoid AI giving out healthcare and financial advice. This was not by accident, but because we understand that AI is far from perfect in it's current form. AI providers even go as far as reminding you of this every single time you use AI:

Therefore, it makes sense that when it comes to things that are incredibly important or sensitive like your health or finances we've (smartly) said 'not yet'. In my mind this boils down to 2 technical flaws in AI which mean it's not really fit for unsupervised advice in these areas. Firstly is that AI can and does hallucinate. We've already covered this in this newsletter so I won't go deep now, but essentially AI is always just guessing the right answer to the question. Most of the time it is pretty good at this, but it still makes mistakes and confidently tells you the answer to a question is something which is entirely made up.

In a non-sensitive environment and with labels saying that the answers should not be fully trusted this is acceptable, but not for what we're now trying to do. Secondly, LLMs are systemically vulnerable to prompt injection, which can be used to misalign them. We've covered this in previous blog posts too. Why is this important here? Through prompt injection - which I'll remind you is a problem that we have no way of solving right now - you can 'hack' an LLM and take control over it's goals and responses. Specifically, you can get it to return any sort of content, including harmful content. Historically this was always 'how do I make a bomb' which was just an example of what could go wrong, but you could very easily see how in the context of healthcare advice, especially mental health, this could be disastrous.

Next up is a data problem. Just a few weeks back we covered on this newsletter how OpenAI (through Mixpanel) suffered a data breach in which they lost customer data. Now, they either did a very good job hushing this up or people don't really seem to care, but it does start to draw our attention to something.

AI providers like Anthropic and OpenAI are seeing huge adoption. ChatGPT alone has an estimated 800 million weekly active users, and sees over 2 billion daily queries. This spans across the globe, across different sectors, and all age groups. Given that, by default, OpenAI can train their models on your data unless you opt out this means that they're harvesting astronomical amounts of data every day. Add into this the fact that many users, especially in enterprise, don't understand what they should and shouldn't be putting into AI chatbots and not only is the amount of data unlike anything we've seen before, but so is the sensitivity of data.

This is already a concern, and puts a big red cross on all of the AI providers back's from the cybercrime perspective, but one of the lines we've not yet crossed is connecting additional personal data sources to these providers. Not only 'personal' data, but some of the most sensitive data that exists: our healthcare records. There are many regulations around the world that put very strict rules on anything that touches healthcare data for the fact that this is such sensitive and personal information that should be treated with the utmost care. If you have dealt with trying to get access to your own (at least in the UK) you'll understand this pain.

The idea, then, of connecting all your medical records to ChatGPT has huge implications and is not something that we ever do lightly. Multiply this by the amount of users that OpenAI have and we're talking about a centralisation of medical records in one place on a scale unlike anything we've ever seen before. This on top of the certified treasure trove (for attackers that is) of data which OpenAI already have, and we're getting to a data / power concentration that is truly terrifying.

Regarding the training of models on this data, there is little said specifically here. We hope that this would follow the same opt-out rules as for regular ChatGPT, but I'm willing to bet OpenAI are hoping not many people know about that. Specifically asking people to connect medical records feels, to me, like an attempt to grab as much healthcare data as possible for training. Remember that in 2025 OpenAI signed huge contracts with governments and healthcare providers which may or may not have something to do with it.

Undoubtedly there are more concerns with this move from OpenAI, but for now these are the major ones that come to mind. Leave a comment down below if you think I've missed anything, and I'll catch you next week!

Be first to secure your agents